Wise Data Science

When Money has No Borders, Your Data Can't Either

Fraud data science rarely fails because the team can’t build a model. It fails because the “model-ready dataset” is a moving target: production systems evolve, definitions drift between services and analytics, and quick fixes pile up until feature pipelines become brittle, slow, and expensive.

At Wise, we tackled that problem by moving data preparation upstream: feature gathering, standardization, and correctness guarantees now live closer to the source systems, so data scientists can iterate quickly without rebuilding fragile transformation logic for every model.

This is the story of what changed, why it worked, and the engineering patterns that made the improvement durable.

The original problem: training data didn’t match production reality

Two failure modes showed up early and often:

1. Production vs analytics misalignment

The same “feature” could mean slightly different things depending on where it was computed, joined, or typed.

2. A dependency bottleneck

Data scientists were blocked whenever they needed new fields, new joins, or fixes—because the logic lived elsewhere, owned elsewhere, and changed on a different cadence.

The first instinct is usually reasonable: “just use production data to train.”

That helps, but it doesn’t automatically solve point-in-time correctness, discoverability, and repeatability of training sets—especially when the data comes from many systems.

The trap: building a custom transformation layer (and then maintaining it forever)

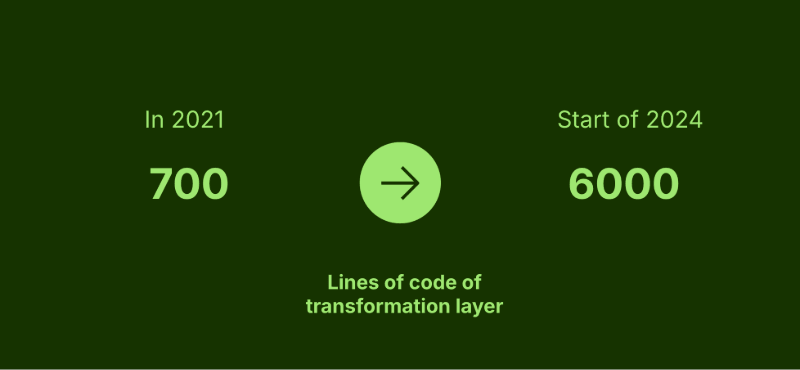

When teams are blocked, they build an abstraction layer to “automate it.” That does remove some dependency friction, but it introduces a new long-term cost: a fragile transformation layer that grows faster than anyone expects.

Two things made it especially painful:

Output shape mismatch: what production emits is not what data science needs.

Type/precision drift: even values that look identical aren’t necessarily identical once types and casting enter the picture (e.g., 1234 vs 1234.00).
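To make the type/precision point concrete, here is a minimal sketch of how one amount can show up in three representations and how a single normalization step removes the difference. The helper name `canonical_amount`, the sample values, and the choice of two decimal places are assumptions for illustration, not the logic used at Wise.

```python
from decimal import Decimal

def canonical_amount(value, places=2):
    """Normalize an amount to a Decimal with a fixed number of decimal places.

    Values are routed through str() so that binary floating-point noise
    is not carried into the Decimal.
    """
    quantum = Decimal(10) ** -places  # places=2 -> Decimal("0.01")
    return Decimal(str(value)).quantize(quantum)

# The same amount as three systems might emit it (hypothetical values).
raw_values = [1234, 1234.0, "1234.00"]

# Serialized naively, the three values do not match each other.
naive = {str(v) for v in raw_values}

# After normalization they collapse into one representation.
normalized = {str(canonical_amount(v)) for v in raw_values}

print(sorted(naive))
print(sorted(normalized))
```

Applying one such rule at the source, rather than in every consumer, keeps string comparisons, joins, and hashes stable across systems.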

Over time, the transformation layer becomes a magnet for “just unblock us” patches. Those patches tend not to be a business priority to clean up—until they cause incidents.

The growth curve tells the story: ETL logic expanded from hundreds of lines to thousands as requirements accumulated and edge cases multiplied.

Challenges within the domain that need to be handled

Point-in-time correctness

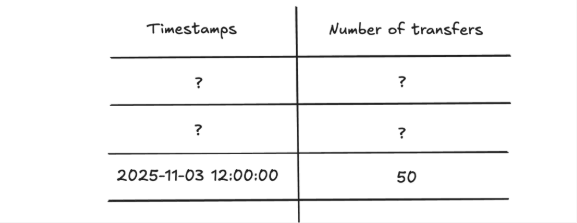

As customers interact with the system, their associated feature values are continuously updated to reflect new behaviors, transactions, and events. Because these updates happen over time, the same feature can have significantly different values depending on the exact moment it is queried or evaluated.

For machine learning training, it is essential that each feature value reflects exactly what was known at that specific moment in time — and nothing that became known later. Failing to do so introduces data leakage and can cause models that perform well during training to have low accuracy in production.

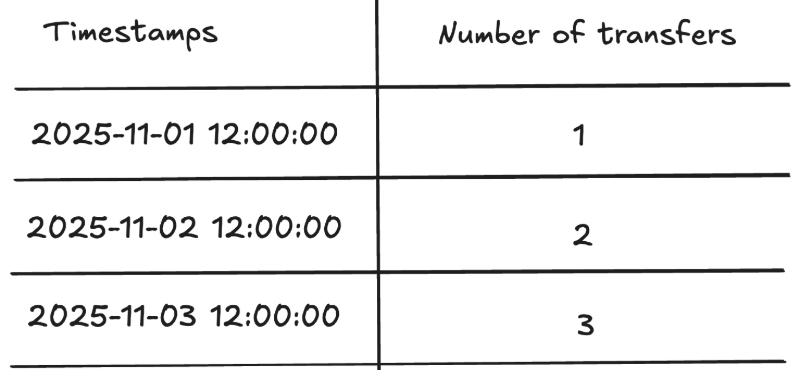

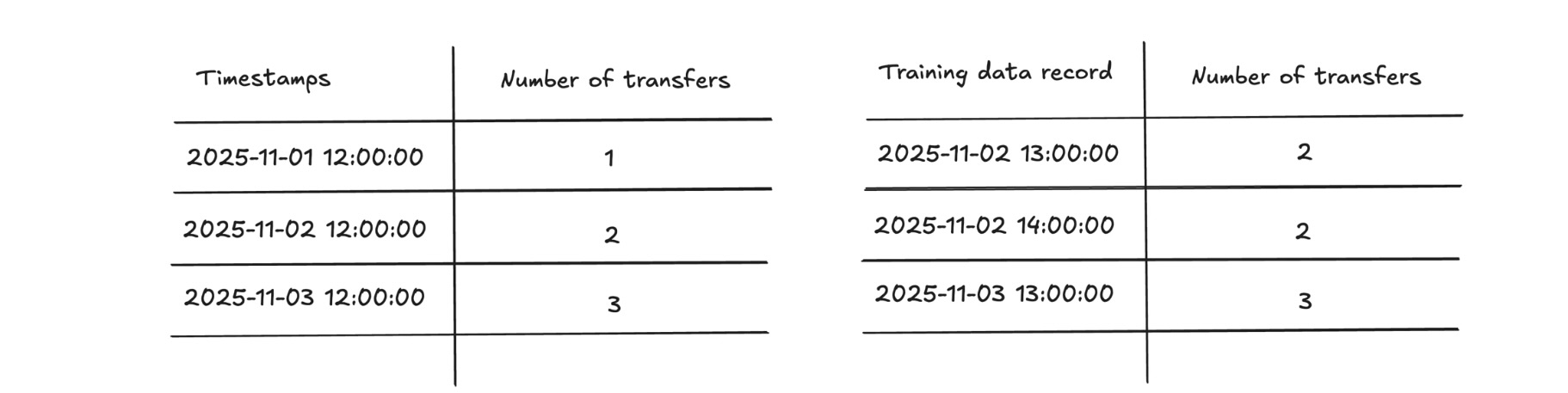

To better understand the problem, let's take a simple example. Consider a feature that represents how many transfers a customer has made. Over time, as the customer engages with the product, the counter will keep increasing.

While generating training data, it’s essential to use only the feature value that was available at the time of the record, to avoid “peeking into the future” and introducing data leakage.

Instead of filling in the latest value, 3, everywhere, historical records are populated with 2, the value that was known at that time.

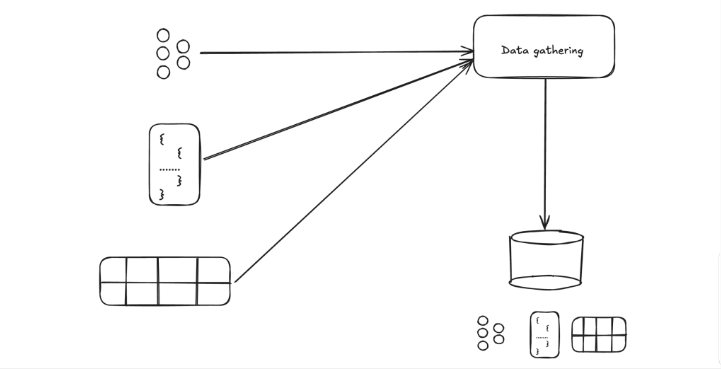
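The transfer-count example can be sketched as a point-in-time lookup. This is an illustrative version using only the standard library; the history, the timestamps, and the function name `value_as_of` are assumptions, and a production system would typically run the same "latest value at or before the record time" join in a batch engine.

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical history of one feature for one customer:
# (time the value became known, number of transfers made so far).
transfer_count_history = [
    (datetime(2024, 1, 5), 1),
    (datetime(2024, 2, 10), 2),
    (datetime(2024, 3, 20), 3),
]

def value_as_of(history, as_of):
    """Return the latest value known at or before `as_of`, or None.

    `history` must be sorted by timestamp in ascending order.
    """
    timestamps = [ts for ts, _ in history]
    idx = bisect_right(timestamps, as_of)
    return history[idx - 1][1] if idx > 0 else None

# A training record created on 1 March must see 2 transfers, not 3.
record_time = datetime(2024, 3, 1)
point_in_time_value = value_as_of(transfer_count_history, record_time)
latest_value = transfer_count_history[-1][1]

print(point_in_time_value, latest_value)
```

A record dated before the first update gets `None` rather than a value from the future, which makes missing history visible instead of silently leaking later data.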

Mixed data format

As the product offerings at Wise grew, so did the data. New domains, databases, and technologies started appearing. Collecting data from different parts of a system became harder because each component produces data in different formats, structures, and meanings, and they evolve independently over time. Gathering and storing them in their original format requires very abstract structures, which makes further work with them complex, time-consuming, and fragile.

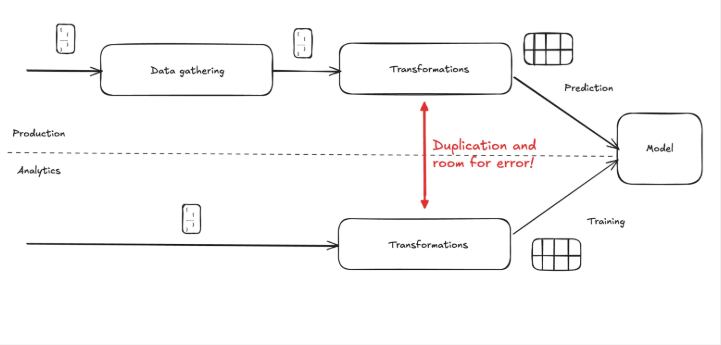

Using this data to train machine learning models requires transformations, because training happens on tabular data. This transformation layer can get quite complex and needs to be modified whenever the production system evolves. For prediction, the model expects data similar to what it was trained on. This creates duplicated work and room for error, as the same transformation logic needs to be implemented in two separate places, likely in two separate technologies.
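As an illustration of the kind of transformation involved, the sketch below flattens a nested event into one tabular row. The event shape and the `flatten` helper are hypothetical. The point is that if one function defines the row shape, training and prediction cannot drift apart the way two separate implementations can.

```python
def flatten(record, parent_key="", sep="_"):
    """Flatten a nested dict into a single-level dict of column -> value."""
    row = {}
    for key, value in record.items():
        column = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            # Recurse into nested objects, prefixing with the parent key.
            row.update(flatten(value, column, sep))
        else:
            row[column] = value
    return row

# Hypothetical nested event, as a production service might emit it.
event = {
    "transfer": {"id": 42, "amount": {"value": "1234.00", "currency": "EUR"}},
    "customer": {"id": 7},
}

row = flatten(event)
print(row)
```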

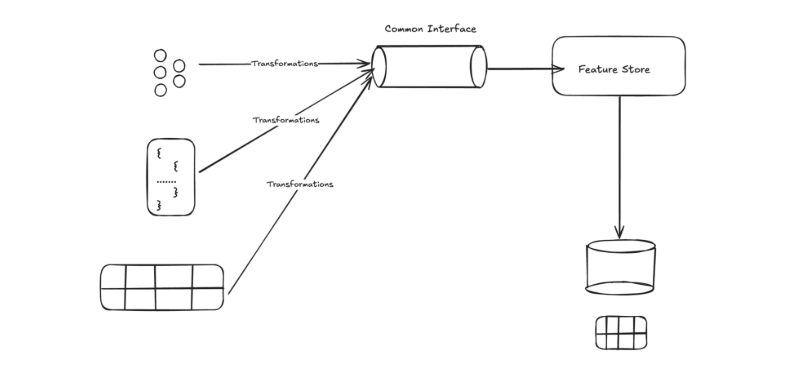

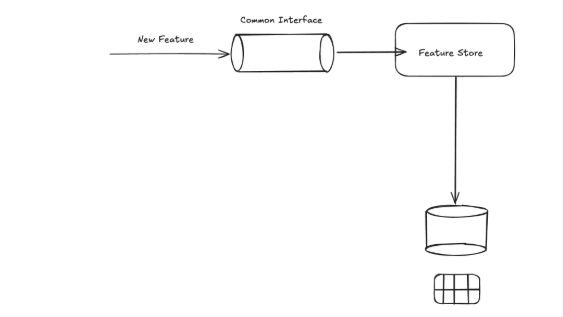

Pushing data preparation upstream and introducing a feature store

Pushing data preparation upstream and introducing a feature store helps by standardizing data formats, schemas, and definitions closer to the source, reducing duplication and inconsistency downstream. Because the transformations happen near the source data, they are also easier to maintain: engineers who change the source data can adapt the transformation layer in the same component, so they are less likely to miss it. Introducing a feature store creates a single, trusted source of features that is easier to reuse, maintain, and evolve as the system grows.

The requirements that forced a rethink: online prediction vs offline training

Even though the domains are quite similar, we can distinguish two fundamentally different use cases for features — model training and prediction — each with different characteristics:

Online (prediction-time)

random access requests

low latency

latest feature values

runs in the production environment

Offline (training-time)

batch access

high throughput

point-in-time correct feature values

runs in the analytics environment

Trying to satisfy both by repeatedly re-deriving features downstream is where reliability and velocity suffer. The system needs a design that supports both use cases with shared definitions.

Feature foundations, not feature scripts

Moving data preparation upstream wasn’t just “move one pipeline.” It was about making feature data a platform capability with a few key building blocks.

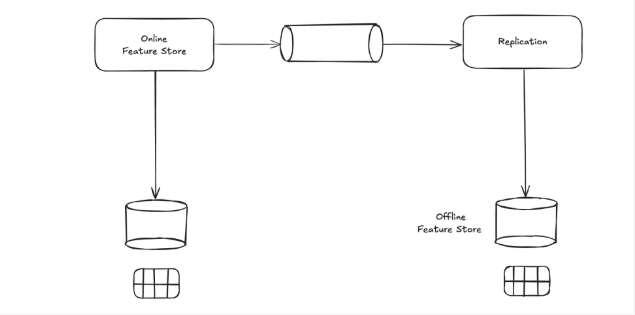

1) Online + Offline feature stores (with point-in-time replication)

Meeting all the technical requirements of the prediction and training use cases with a single technology is challenging because the two workloads have fundamentally different characteristics, constraints, and optimization goals.

Prediction is better suited to databases. Techniques like indexing help ensure that random access requests are served with low latency.

Training, on the other hand, works better on a data lake architecture, which is significantly more cost-efficient and scalable than traditional databases for storing large amounts of historical data.

Tackling the two problems on separate platforms is the more sensible approach. It’s possible to leverage the advantages of both by splitting the problem into multiple components:

Online feature store: Stores the latest values of the features. Backed by a database optimized for low-latency reads, with an API layer that serves features to services in production.

Offline feature store: Stores not just the latest values but also the history of feature states. Built on a data lake architecture and optimized for batch processing and historical analysis, with data versioning.

Point-in-time correct replication: Runs between the two components and ensures that the offline store is populated as well.

This matters because it makes “training-serving consistency” a product feature of the platform, rather than a hope-and-pray property of each model’s ETL.
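The split described above can be sketched in a few lines. This is an in-memory toy, not the architecture used at Wise: the class name `FeatureStoreSketch`, its methods, and the sample data are all assumptions. It only illustrates the idea of one write path feeding both a latest-value store and a timestamped history.

```python
from bisect import bisect_right
from collections import defaultdict
from datetime import datetime

class FeatureStoreSketch:
    """In-memory stand-in for an online store plus an offline history."""

    def __init__(self):
        self.online = {}                  # (entity_id, feature) -> latest value
        self.offline = defaultdict(list)  # (entity_id, feature) -> [(ts, value)]

    def write(self, entity_id, feature, value, ts):
        """Single write path: update the latest value and append to history.

        Assumes updates for a given key arrive in timestamp order.
        """
        key = (entity_id, feature)
        self.online[key] = value
        self.offline[key].append((ts, value))

    def get_latest(self, entity_id, feature):
        """Prediction-time read: random access to the latest value."""
        return self.online.get((entity_id, feature))

    def get_as_of(self, entity_id, feature, as_of):
        """Training-time read: the value known at or before `as_of`."""
        history = self.offline.get((entity_id, feature), [])
        idx = bisect_right([ts for ts, _ in history], as_of)
        return history[idx - 1][1] if idx > 0 else None

store = FeatureStoreSketch()
store.write("customer-1", "transfer_count", 1, datetime(2024, 1, 5))
store.write("customer-1", "transfer_count", 2, datetime(2024, 2, 10))
store.write("customer-1", "transfer_count", 3, datetime(2024, 3, 20))

print(store.get_latest("customer-1", "transfer_count"))
print(store.get_as_of("customer-1", "transfer_count", datetime(2024, 3, 1)))
```

Because both reads are fed by the same `write` call, the value a model sees at prediction time and the value reconstructed for training come from the same update.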

2) A feature catalog that people actually use

To move fast safely, features must be:

well documented,

discoverable,

tied to an authoritative schema/source of truth.

That’s what a feature catalog provides—but only if it’s maintained continuously, like any other product surface, not treated as a one-off documentation sprint.

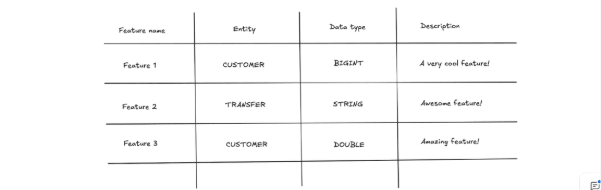

Let’s look at an example of what a feature catalog could look like and which attributes are important to include:

Entity: Specifies what entity the feature belongs to. Entities are usually domain-specific, so what makes sense here differs from case to case.

Data type: It’s essential to specify what data type to expect for each feature. It sets expectations for how the feature is used and, more importantly, can be used to create schemas in the system.

Description: Providing detailed descriptions of features can greatly improve reusability and discoverability. Ideally, it should contain important details about how the feature works, how it is calculated, restrictions to be aware of, and any additional information worth knowing.
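A catalog entry with these three attributes could be modeled roughly as follows. The class names, the `register`, `schema`, and `validate` methods, and the sample feature are hypothetical. The sketch shows how the data type attribute can drive both schema generation and value checks.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FeatureDefinition:
    name: str
    entity: str       # what the feature belongs to, e.g. "customer"
    dtype: type       # expected Python type of the feature value
    description: str  # how it is calculated, restrictions, caveats

class FeatureCatalog:
    def __init__(self):
        self._features = {}

    def register(self, definition):
        """Add a feature; reject duplicates and undocumented entries."""
        if definition.name in self._features:
            raise ValueError(f"feature already registered: {definition.name}")
        if not definition.description.strip():
            raise ValueError("a description is required")
        self._features[definition.name] = definition

    def schema(self, entity):
        """Derive a column -> type schema for one entity from the catalog."""
        return {f.name: f.dtype for f in self._features.values()
                if f.entity == entity}

    def validate(self, name, value):
        """Check a value against the registered data type."""
        return isinstance(value, self._features[name].dtype)

catalog = FeatureCatalog()
catalog.register(FeatureDefinition(
    name="transfer_count",
    entity="customer",
    dtype=int,
    description="Number of transfers the customer has made so far.",
))

print(catalog.schema("customer"))
print(catalog.validate("transfer_count", "3"))
```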

3) Backfilling and feature evolution as first-class workflows

Adding a new feature to the feature store might seem like a trivial matter at first glance. One just needs to:

Add a new entry to the feature catalog

Start sending the new feature updates through the common interface

However, while this takes care of new feature values, it is just as important to also fill in the historical values.

Having proper backfilling flows and processes for filling historical values for the features is crucial for a well-functioning feature store.
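A backfill for the transfer-count feature might look like the sketch below. The function name and the sample events are assumptions. The important detail is that each backfilled value is stamped with the time of the historical event, not the time the backfill ran, so point-in-time lookups keep working for old training records.

```python
from datetime import datetime

def backfill_transfer_count(transfers):
    """Replay historical transfers and emit timestamped feature values.

    `transfers` is an iterable of (customer_id, transfer_time) pairs.
    Returns {customer_id: [(transfer_time, count_after_transfer), ...]}.
    """
    history = {}
    # Replay in event-time order so counts increase chronologically.
    for customer_id, ts in sorted(transfers, key=lambda t: t[1]):
        values = history.setdefault(customer_id, [])
        values.append((ts, len(values) + 1))
    return history

# Hypothetical historical events, deliberately out of order.
historical_transfers = [
    ("customer-1", datetime(2024, 2, 10)),
    ("customer-2", datetime(2024, 1, 20)),
    ("customer-1", datetime(2024, 1, 5)),
]

backfilled = backfill_transfer_count(historical_transfers)
print(backfilled["customer-1"])
```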

Making it stick: monitoring, validation, and data contracts

A feature platform only helps if the data is trustworthy day after day. We treated this as an engineering problem, not a compliance checkbox:

Clear data contracts to align production and analytics expectations (especially data types and semantic alignment).

Automated validation and monitoring to detect breakages early and prevent silent skew.

The monitoring/validation suite focused on:

Availability

feature availability targets (e.g., >95% available)

feature non-zero targets (e.g., >90% non-zero)

Format validation

numeric thresholds

string length constraints

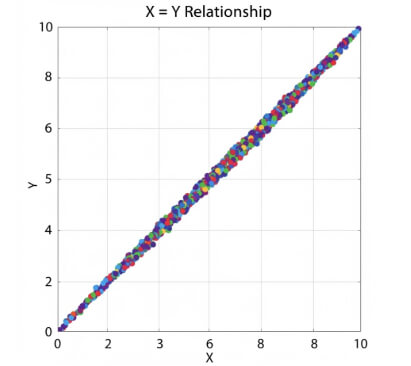

Data alignment checks

generate an expected dataset from a different datasource and do a 1:1 comparison

Drift detection

compare current distributions/characteristics to historical baselines

This turns “data” into “quality data”.
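The checks listed above can each be expressed as a small function. The sketch below is illustrative only: the function names, the sample data, and the drift statistic (mean shift measured in baseline standard deviations) are assumptions, while the 95% and 90% thresholds are the example targets mentioned earlier.

```python
from statistics import mean, pstdev

def availability(values):
    """Share of records where the feature is present (not None)."""
    return sum(v is not None for v in values) / len(values)

def non_zero_rate(values):
    """Share of present values that are non-zero."""
    present = [v for v in values if v is not None]
    return sum(v != 0 for v in present) / len(present) if present else 0.0

def within_range(values, low, high):
    """Format check: every present numeric value lies in [low, high]."""
    return all(low <= v <= high for v in values if v is not None)

def mismatches(actual, expected):
    """Alignment check: keys whose values differ between two sources."""
    return sorted(k for k in actual.keys() | expected.keys()
                  if actual.get(k) != expected.get(k))

def mean_shift(current, baseline):
    """Drift signal: shift of the current mean, in baseline std deviations."""
    spread = pstdev(baseline)
    if spread == 0:
        return 0.0 if mean(current) == mean(baseline) else float("inf")
    return abs(mean(current) - mean(baseline)) / spread

# Hypothetical feature values: one missing and one zero out of ten.
transfer_counts = [1, 2, 3, 4, None, 0, 5, 6, 7, 8]
report = {
    "availability_ok": availability(transfer_counts) > 0.95,
    "non_zero_ok": non_zero_rate(transfer_counts) > 0.90,
    "range_ok": within_range(transfer_counts, 0, 10_000),
}

# Hypothetical 1:1 comparison against a dataset built from another source.
diff = mismatches({"c1": 2, "c2": 5}, {"c1": 2, "c2": 4, "c3": 1})

# Hypothetical drift check against a historical baseline.
shift = mean_shift([15, 16, 14], [10, 12, 11, 13, 9])

print(report, diff, round(shift, 2))
```

In this sample the availability and non-zero checks fail and the range check passes, which is the kind of signal that should raise an alert before a model is retrained on the data.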

Outcomes: reliability up, experimentation up, burnout down

Once data prep moved upstream and the foundations were in place, the impact showed up in both engineering metrics and team health:

Incidents dropped from 9 to 0 over a quarter

More experiments became feasible because feature work stopped being a custom pipeline project

More robust data reduced rework and firefighting

Efficiency spiked as the system became less dependent on heroic debugging

What we learned (and what we’d tell other teams)

This transformation wasn’t about a single tool. It was about choosing to pay the right cost upfront:

Treat data as a product

Automate manual steps

Design with downstream use in mind

Invest in contracts + monitoring early—before scale makes the cost unavoidable

Work across ENG <> PRODUCT <> DATA SCIENCE to keep the pipeline aligned with reality
